6.4 Overlapping GPU and CPU Execution

Published on 2018-06-20 | Category: CUDA, Freshman

Abstract: This article shows how to overlap GPU and CPU execution.

Keywords: Overlapping GPU and CPU Execution

Overlapping GPU and CPU Execution

Besides overlapping data transfers with kernel execution, as discussed in the previous sections, another important goal is keeping the CPU busy with its own computation while the GPU works. That is the focus of this article.

The example in this article works as follows:

- Memory copies and kernels are dispatched to their respective streams

- The CPU computes while waiting for the stop event to complete

The specific code is as follows:

```cuda
cudaEvent_t start, stop;
CHECK(cudaEventCreate(&start));
CHECK(cudaEventCreate(&stop));
CHECK(cudaEventRecord(start, 0));
// Queue copy-in, kernel, and copy-out for each segment on its own stream.
for (int i = 0; i < N_SEGMENT; i++)
{
    int ioffset = i * iElem;
    CHECK(cudaMemcpyAsync(&a_d[ioffset], &a_h[ioffset], nByte / N_SEGMENT, cudaMemcpyHostToDevice, stream[i]));
    CHECK(cudaMemcpyAsync(&b_d[ioffset], &b_h[ioffset], nByte / N_SEGMENT, cudaMemcpyHostToDevice, stream[i]));
    sumArraysGPU<<<grid, block, 0, stream[i]>>>(&a_d[ioffset], &b_d[ioffset], &res_d[ioffset], iElem);
    CHECK(cudaMemcpyAsync(&res_from_gpu_h[ioffset], &res_d[ioffset], nByte / N_SEGMENT, cudaMemcpyDeviceToHost, stream[i]));
}
// Record the stop event after all work has been queued.
CHECK(cudaEventRecord(stop, 0));
// Do CPU work (here, just counting) until the stop event completes.
int counter = 0;
while (cudaEventQuery(stop) == cudaErrorNotReady)
{
    counter++;
}
printf("cpu counter:%d\n", counter);
```
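The listing above relies on names that are defined elsewhere in the full program (`CHECK`, `sumArraysGPU`, `stream`, `grid`, `block`, and the host and device buffers). As a rough sketch of what that surrounding setup might look like, the program below fills in those pieces under stated assumptions: the `CHECK` macro body, the kernel body, the value of `N_SEGMENT`, the block size, and the helper name `runSegmentedSum` are all hypothetical and are not taken from the original repository. One detail worth noting is that the host buffers are allocated as pinned memory with `cudaHostAlloc`, since `cudaMemcpyAsync` can only run asynchronously with respect to the host when the host memory is page-locked.

```cuda
#include <cstdio>
#include <cstdlib>
#include <cuda_runtime.h>

// Assumed error-checking macro; the original CHECK lives in the repository.
#define CHECK(call)                                                  \
    do {                                                             \
        cudaError_t err_ = (call);                                   \
        if (err_ != cudaSuccess) {                                   \
            fprintf(stderr, "CUDA error: %s (%s:%d)\n",              \
                    cudaGetErrorString(err_), __FILE__, __LINE__);   \
            exit(EXIT_FAILURE);                                      \
        }                                                            \
    } while (0)

#define N_SEGMENT 4  // assumed number of segments, one stream each

// Assumed kernel body: element-wise sum of two arrays.
__global__ void sumArraysGPU(const float *a, const float *b, float *res, int n)
{
    int idx = blockIdx.x * blockDim.x + threadIdx.x;
    if (idx < n) res[idx] = a[idx] + b[idx];
}

// Runs the segmented multi-stream sum and returns the number of wrong
// elements (0 means the GPU result matches the expected value).
// nElem is assumed to be divisible by N_SEGMENT.
int runSegmentedSum(int nElem)
{
    const size_t nByte = nElem * sizeof(float);
    const int iElem = nElem / N_SEGMENT;

    // Pinned host buffers, so the async copies do not block the host.
    float *a_h, *b_h, *res_from_gpu_h;
    CHECK(cudaHostAlloc((void **)&a_h, nByte, cudaHostAllocDefault));
    CHECK(cudaHostAlloc((void **)&b_h, nByte, cudaHostAllocDefault));
    CHECK(cudaHostAlloc((void **)&res_from_gpu_h, nByte, cudaHostAllocDefault));
    for (int i = 0; i < nElem; i++) { a_h[i] = 1.0f; b_h[i] = 2.0f; }

    float *a_d, *b_d, *res_d;
    CHECK(cudaMalloc((void **)&a_d, nByte));
    CHECK(cudaMalloc((void **)&b_d, nByte));
    CHECK(cudaMalloc((void **)&res_d, nByte));

    cudaStream_t stream[N_SEGMENT];
    for (int i = 0; i < N_SEGMENT; i++) CHECK(cudaStreamCreate(&stream[i]));

    dim3 block(256);
    dim3 grid((iElem + block.x - 1) / block.x);
    for (int i = 0; i < N_SEGMENT; i++) {
        int ioffset = i * iElem;
        CHECK(cudaMemcpyAsync(&a_d[ioffset], &a_h[ioffset], nByte / N_SEGMENT, cudaMemcpyHostToDevice, stream[i]));
        CHECK(cudaMemcpyAsync(&b_d[ioffset], &b_h[ioffset], nByte / N_SEGMENT, cudaMemcpyHostToDevice, stream[i]));
        sumArraysGPU<<<grid, block, 0, stream[i]>>>(&a_d[ioffset], &b_d[ioffset], &res_d[ioffset], iElem);
        CHECK(cudaMemcpyAsync(&res_from_gpu_h[ioffset], &res_d[ioffset], nByte / N_SEGMENT, cudaMemcpyDeviceToHost, stream[i]));
    }
    CHECK(cudaGetLastError());       // catch kernel launch errors
    CHECK(cudaDeviceSynchronize());  // wait for every stream before reading results

    int mismatches = 0;
    for (int i = 0; i < nElem; i++)
        if (res_from_gpu_h[i] != 3.0f) mismatches++;

    for (int i = 0; i < N_SEGMENT; i++) CHECK(cudaStreamDestroy(stream[i]));
    CHECK(cudaFree(a_d)); CHECK(cudaFree(b_d)); CHECK(cudaFree(res_d));
    CHECK(cudaFreeHost(a_h)); CHECK(cudaFreeHost(b_h)); CHECK(cudaFreeHost(res_from_gpu_h));
    return mismatches;
}

int main()
{
    int mismatches = runSegmentedSum(1 << 20);
    printf("mismatches: %d\n", mismatches);
    return mismatches == 0 ? 0 : 1;
}
```

This sketch waits with `cudaDeviceSynchronize` only to keep the result check simple; the event-polling loop from the listing can take its place when the CPU has other work to do in the meantime.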

Complete code on GitHub: https://github.com/Tony-Tan/CUDA_Freshman (Stars are welcome!)

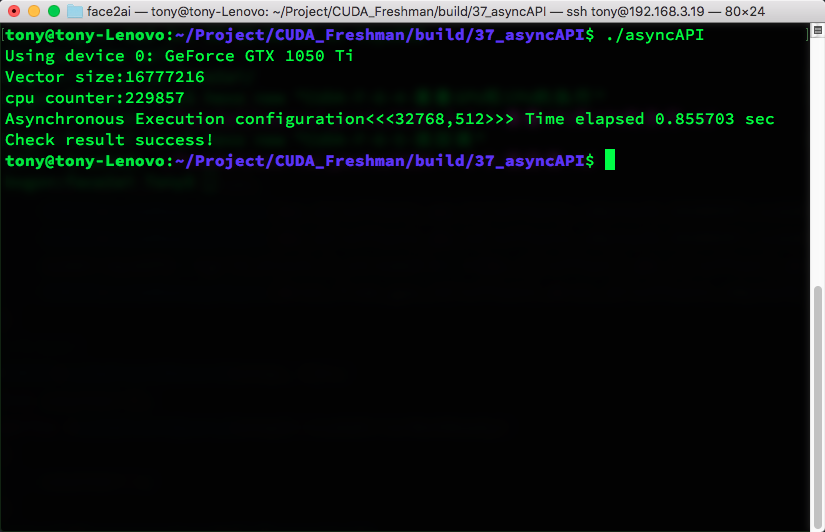

The result is:

As we can see, the CPU keeps working (here, incrementing a counter) until the stop event completes, so the CPU and GPU run in parallel.

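The key point in the code is the call to `cudaEventQuery(stop)`, which is non-blocking: it returns `cudaErrorNotReady` immediately while the work preceding the event is still in progress, and `cudaSuccess` once that work has finished. A blocking call such as `cudaEventSynchronize(stop)` would instead stall the host thread until the event completes, and the CPU computation could not continue.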
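As a minimal sketch of this polling pattern, the self-contained program below launches a kernel, records an event, and polls it with `cudaEventQuery` while counting on the CPU. The names `busyKernel`, `pollWhileBusy`, and the `CHECK` macro body are hypothetical, introduced here only for illustration. Unlike the bare `while` loop, the helper also inspects the final status, because `cudaEventQuery` can return codes other than `cudaErrorNotReady` and `cudaSuccess` when an earlier asynchronous operation has failed.

```cuda
#include <cstdio>
#include <cstdlib>
#include <cuda_runtime.h>

// Assumed error-checking macro, similar in spirit to the CHECK used above.
#define CHECK(call)                                                  \
    do {                                                             \
        cudaError_t err_ = (call);                                   \
        if (err_ != cudaSuccess) {                                   \
            fprintf(stderr, "CUDA error: %s (%s:%d)\n",              \
                    cudaGetErrorString(err_), __FILE__, __LINE__);   \
            exit(EXIT_FAILURE);                                      \
        }                                                            \
    } while (0)

// Hypothetical kernel whose only purpose is to keep the GPU busy for a while.
__global__ void busyKernel(float *data, int n, int iters)
{
    int idx = blockIdx.x * blockDim.x + threadIdx.x;
    if (idx < n) {
        float v = data[idx];
        for (int i = 0; i < iters; i++) v = v * 1.0001f + 0.5f;
        data[idx] = v;
    }
}

// Polls the event without blocking and counts loop iterations as a stand-in
// for real CPU work. Returns how many times the event was found not ready.
long pollWhileBusy(cudaEvent_t ev)
{
    long counter = 0;
    cudaError_t status;
    while ((status = cudaEventQuery(ev)) == cudaErrorNotReady) {
        counter++;  // replace with useful CPU computation
    }
    CHECK(status);  // anything other than cudaSuccess here is a real error
    return counter;
}

int main()
{
    const int n = 1 << 20;
    float *d_data = NULL;
    CHECK(cudaMalloc((void **)&d_data, n * sizeof(float)));
    CHECK(cudaMemset(d_data, 0, n * sizeof(float)));

    cudaEvent_t stop;
    CHECK(cudaEventCreate(&stop));

    // The launch returns immediately; the kernel runs asynchronously.
    busyKernel<<<(n + 255) / 256, 256>>>(d_data, n, 10000);
    CHECK(cudaGetLastError());
    CHECK(cudaEventRecord(stop, 0));

    long polls = pollWhileBusy(stop);
    printf("cpu counter:%ld\n", polls);

    CHECK(cudaEventDestroy(stop));
    CHECK(cudaFree(d_data));
    return 0;
}
```

The counter value depends on the GPU, the kernel duration, and the host CPU, so it will differ from run to run. Replacing the call to `pollWhileBusy(stop)` with `cudaEventSynchronize(stop)` gives the blocking variant, in which the host thread does no work of its own until the event completes.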

Summary

This article is short, but its example clearly demonstrates how the GPU and CPU can work in parallel.