2.1 CUDA Programming Model Overview (Part II)

2018-02-16 | CUDA , Freshman | 0 |

Abstract: This article continues from the previous one, covering kernel functions and error handling in the CUDA programming model. Keywords: CUDA Kernel Function, CUDA Error Handling

CUDA Programming Model Overview (Part II)

Continuing with the second half of the CUDA programming model -- kernel functions and error handling.

- Kernel functions

- Launching kernel functions

- Writing kernel functions

- Validating kernel functions

- Error handling

Kernel Function Overview

A kernel function is that piece of serial code running among the many threads in the CUDA model. This code runs on the device, is compiled by NVCC, and produces machine code for the GPU. So writing CUDA programs is essentially writing kernel functions. The first step is to ensure the kernel function runs correctly and produces correct results. The second step is optimizing the CUDA program -- whether optimizing algorithms or adjusting memory structure and thread structure, all optimizations involve modifying the code within the kernel function.

We've always treated our CPU as a controller. Running a kernel function must be initiated from the CPU, so let's start learning how to launch a kernel function.

Launching Kernel Functions

Kernel functions are launched using the following ANSI C-extended CUDA C instruction:

kernel_name<<<grid, block>>>(argument list);

The standard C prototype is simply a C function call:

function_name(argument list);

The triple angle brackets <<<grid, block>>> configure the thread structure for device code execution (or simply put, configure the kernel). This refers to the grid and block we mentioned in the thread structure from the previous article. Recall that we use CUDA C's built-in data type dim3 variables to configure grid and block (mentioned above: on the device side, the data types for accessing grid and block properties are uint3, which are unmodifiable constant-type structures -- emphasizing this again here).

By specifying the dimensions of grid and block, we can configure:

- The number of threads in the kernel

- The thread layout used in the kernel

We can configure kernels using dim3-type grid and block dimensions, or we can use int-type variables or constants for direct initialization:

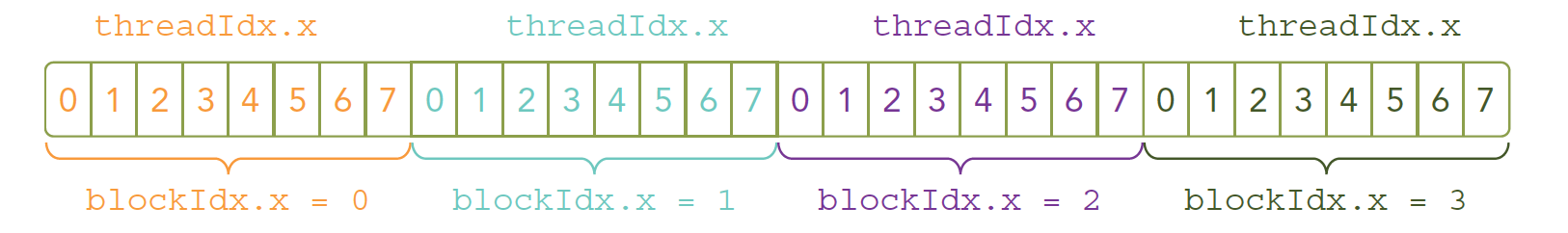

kernel_name<<<4, 8>>>(argument list);

The thread layout for the above instruction is:

Our kernel function is simultaneously copied to multiple threads for execution. As mentioned earlier, multiple threads executing on the same data is a waste of time. So to make threads correspond to different data as we intend, we need to give each thread a unique identifier. Since device memory is linear (basically all memory hardware on the market stores data in linear form), looking at the diagram above, we can combine threadIdx.x and blockIdx.x to obtain the corresponding thread's unique identifier (later we'll see that threadIdx and blockIdx can be combined in many different ways).

Now it's time to modify our code -- changing the kernel configuration to produce code that yields the same results but with different efficiency:

- One block:

kernel_name<<<1, 32>>>(argument list);

- 32 blocks:

kernel_name<<<32, 1>>>(argument list);

The above code, if there's no special structure in the kernel function, should produce identical results, but efficiency will differ.

The above covers the launching part. When the host launches a kernel function, control immediately returns to the host -- the host does not wait for the device to finish the kernel. We mentioned this in the previous article (the line that needs to be added after waiting for hello world output).

To make the host wait for the device to execute, use the following function:

cudaError_t cudaDeviceSynchronize(void);

This is an explicit method. There's also an implicit method, where the host doesn't explicitly state it's waiting for the device, but the device won't finish and the host can't proceed -- for example, the memory copy function:

cudaError_t cudaMemcpy(void* dst, const void* src,

size_t count, cudaMemcpyKind kind);

This function was introduced above. When the instruction immediately following the kernel launch copies data from device to host, the host must wait for the device computation to complete.

All CUDA kernel launches are asynchronous -- this is completely different from C.

Writing Kernel Functions

We can launch kernel functions, but where do they come from? We write them, of course. A kernel function is also a function, but declaring a kernel function follows a somewhat templated pattern:

__global__ void kernel_name(argument list);

Note: Declaration and definition are different -- CUDA is consistent with C in this regard.

The qualifier __global__ doesn't exist before C functions. CUDA C has some other qualifiers not found in C, as follows:

| Qualifier | Execution | Call | Notes |

|---|---|---|---|

| global | Device side | Can be called from host or from device with compute capability 3+ | Must have void return type |

| device | Device side | Device-side call | |

| host | Host side | Host-side call | Can be omitted |

There's a special case where some functions can be simultaneously defined as __device__ and __host__. Such functions can be called by both device and host code. Host-side code calling a function is normal; device-side function calling is consistent with C, but the function must be declared as device-side code to tell nvcc to compile it into device machine code. If a function is declared for both host and device, the compiler must generate two different sets of machine code.

Kernel function writing has the following restrictions:

- Can only access device memory

- Must have void return type

- Does not support variable number of arguments

- Does not support static variables

- Exhibits explicit asynchronous behavior

A common phenomenon in parallel programs is parallelizing serial code blocks -- specifically, parallelizing for loops.

For example:

Serial:

void sumArraysOnHost(float *A, float *B, float *C, const int N) {

for (int i = 0; i < N; i++)

C[i] = A[i] + B[i];

}

Parallel:

__global__ void sumArraysOnGPU(float *A, float *B, float *C) {

int i = threadIdx.x;

C[i] = A[i] + B[i];

}

These two simple code segments show what for-loop unrolling parallelization looks like. We can roughly see the parallelization approach.

Validating Kernel Functions

Validating a kernel function means verifying its correctness. The following code appeared above but also contains the method for validating kernel functions:

Code repository: https://github.com/Tony-Tan/CUDA_Freshman

/*

* https://github.com/Tony-Tan/CUDA_Freshman

* 3_sum_arrays

*/

#include <cuda_runtime.h>

#include <stdio.h>

#include "freshman.h"

void sumArrays(float * a, float * b, float * res, const int size)

{

for(int i = 0; i < size; i += 4)

{

res[i] = a[i] + b[i];

res[i+1] = a[i+1] + b[i+1];

res[i+2] = a[i+2] + b[i+2];

res[i+3] = a[i+3] + b[i+3];

}

}

__global__ void sumArraysGPU(float *a, float *b, float *res)

{

int i = threadIdx.x;

res[i] = a[i] + b[i];

}

int main(int argc, char **argv)

{

int dev = 0;

cudaSetDevice(dev);

int nElem = 32;

printf("Vector size:%d\n", nElem);

int nByte = sizeof(float) * nElem;

float *a_h = (float*)malloc(nByte);

float *b_h = (float*)malloc(nByte);

float *res_h = (float*)malloc(nByte);

float *res_from_gpu_h = (float*)malloc(nByte);

memset(res_h, 0, nByte);

memset(res_from_gpu_h, 0, nByte);

float *a_d, *b_d, *res_d;

CHECK(cudaMalloc((float**)&a_d, nByte));

CHECK(cudaMalloc((float**)&b_d, nByte));

CHECK(cudaMalloc((float**)&res_d, nByte));

initialData(a_h, nElem);

initialData(b_h, nElem);

CHECK(cudaMemcpy(a_d, a_h, nByte, cudaMemcpyHostToDevice));

CHECK(cudaMemcpy(b_d, b_h, nByte, cudaMemcpyHostToDevice));

dim3 block(nElem);

dim3 grid(nElem / block.x);

sumArraysGPU<<<grid, block>>>(a_d, b_d, res_d);

printf("Execution configuration<<<%d,%d>>>\n", block.x, grid.x);

CHECK(cudaMemcpy(res_from_gpu_h, res_d, nByte, cudaMemcpyDeviceToHost));

sumArrays(a_h, b_h, res_h, nElem);

checkResult(res_h, res_from_gpu_h, nElem);

cudaFree(a_d);

cudaFree(b_d);

cudaFree(res_d);

free(a_h);

free(b_h);

free(res_h);

free(res_from_gpu_h);

return 0;

}

During the development phase, verifying each step is absolutely more efficient than writing all features first and then testing. The same applies to writing CUDA -- testing each small code block may seem slow, but it actually improves overall efficiency significantly.

CUDA tip: when debugging, you can configure the kernel to run as a single thread:

kernel_name<<<1, 1>>>(argument list)

Error Handling

All programming requires error handling. Early coding errors are caught by the compiler. Memory errors can be observed. But some logical errors are hard to find, sometimes not discovered until the program goes live. Some serious bugs are difficult to reproduce -- they don't always appear but are fatal. Moreover, CUDA executes asynchronously, so when an error occurs, it's not necessarily triggered by which specific instruction -- this is very frustrating. This is where we need defensive error handling, like this macro in our code repository's header file:

#define CHECK(call)\

{\

const cudaError_t error = call;\

if(error != cudaSuccess)\

{\

printf("ERROR: %s:%d,", __FILE__, __LINE__);\

printf("code:%d,reason:%s\n", error, cudaGetErrorString(error));\

exit(1);\

}\

}

This gets the return result after each function executes and handles unsuccessful results. Every CUDA C API call returns an error code that we can make good use of. Of course, this can be removed in release builds, but it must be present during development.

Compilation and Execution

Next, let's compile and execute the vector addition code from above and observe the results.

Compilation command:

nvcc xxxx.cu -o xxxx

Summary

This article was much smoother to write than the previous ones because the knowledge here is very coherent, unlike the overview articles with their scattered topics. We'll continue tomorrow.